xiaohao New Contributor

2 months ago

Agilex 7 Platform Designer PIO addr width

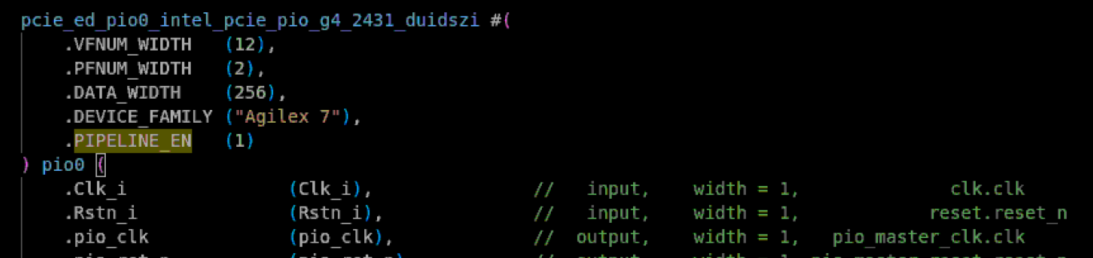

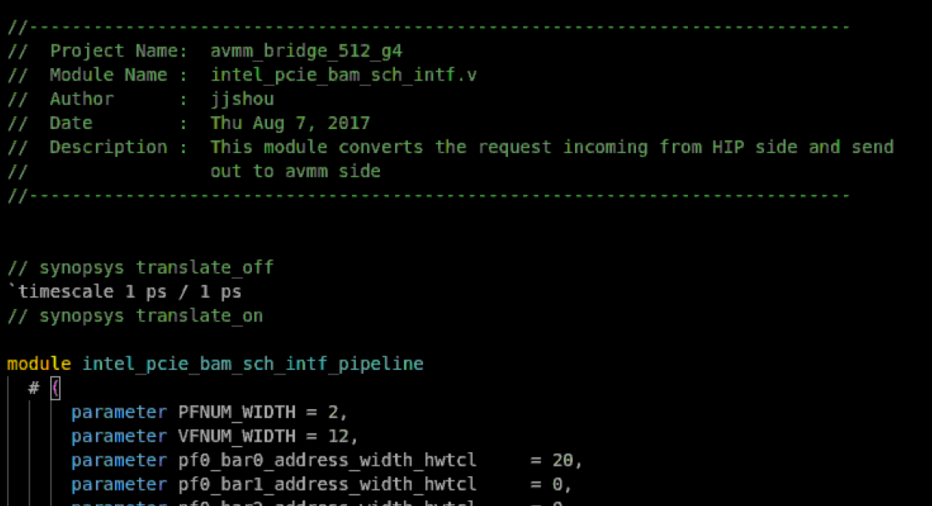

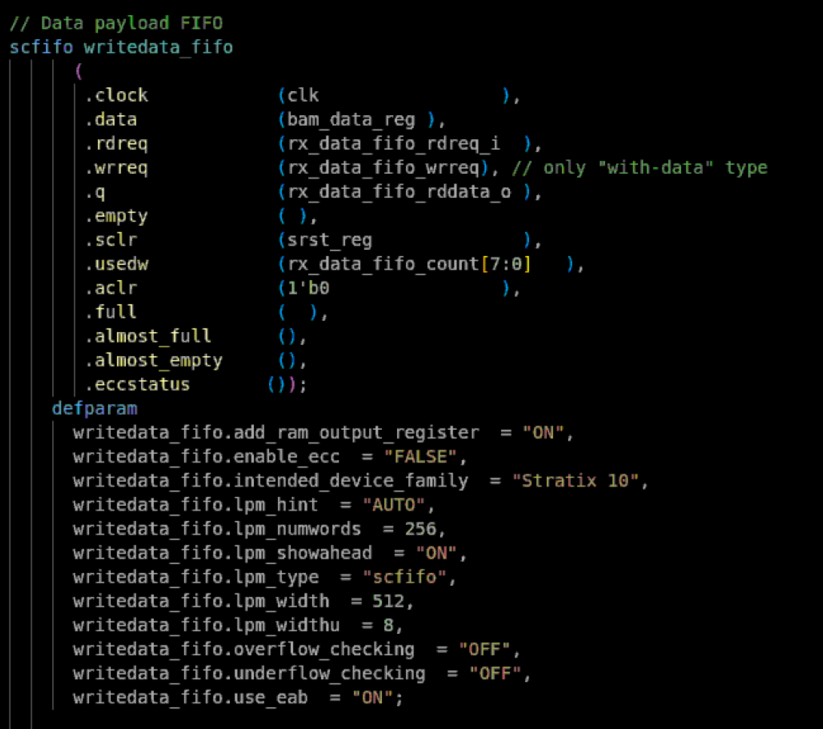

I am using the PIO example design of the P-Tile AVST PCIe IP on Intel Agilex 7. The original design maps a 16KB RAM to BAR0. My design needs a larger address space, so I changed the PCIe IP's BAR configuration to increase the BAR0 size to 2MB. However, I then found that in the "PIO Design Example for PCI Express Gen4," the module that converts AVST to AVMM only supports a 16KB address space on the AVMM side. I replaced that module with the "PCIe PIO" IP core, but it can only access a 1MB region. How can I access the full 2MB BAR0 region?
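For reference, here is a rough sketch of the address-width arithmetic behind the three sizes mentioned above. It assumes plain byte addressing and power-of-two region sizes; the helper name `addr_bits` is made up for illustration, and the actual address width parameter of either IP may be defined differently (for example, in words rather than bytes).

```python
def addr_bits(size_bytes: int) -> int:
    """Number of byte-address bits needed to span a power-of-two region."""
    if size_bytes <= 0 or size_bytes & (size_bytes - 1):
        raise ValueError("region size must be a positive power of two")
    # For a power of two, log2(size) equals bit_length() - 1.
    return size_bytes.bit_length() - 1


KB = 1024
MB = 1024 * KB

# Sizes taken from the post: original RAM, the PCIe PIO limit, the desired BAR0.
for label, size in [("16KB", 16 * KB), ("1MB", 1 * MB), ("2MB", 2 * MB)]:
    print(f"{label}: {addr_bits(size)} byte-address bits")
```

Under these assumptions, 16KB needs 14 address bits, 1MB needs 20, and 2MB needs 21, so the 2MB BAR0 is one address bit wider than the 1MB region that is reachable now.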